DDSP Piano: Neural Network Modelled Grand

A new, exciting frontier in synthesis 10/11/23


It is no exaggeration to say that "digital instruments and synthesizers have greatly impacted the way music is being composed, produced, and played and have thus participated in the shaping of new musical genres. Analog and digital synthesizers undoubtedly allowed for the exploration of new sounds, by producing them in very different manners compared to physical instruments."

These are the opening words of a new paper by researchers Lenny Renault, Rémi Mignot and Axel Roebel. They continue:

"Additionally, progresses made in instrument modeling have made it possible for more musicians to use the sounds of more acoustic instruments in a simplified way. Therefore, for these instrument models to be effective in the music-making process, they have to be easily controlled while accurately reproducing the subtle nuances of the modelled instrument sounds."

By using neural networks, researchers hope to create instrument models of incredible realism and nuance, outperforming the largest sample libraries. Such networks are modelled on how our human brains learn and "have shown to be capable of reproducing, manipulating, and understanding musical sounds in unprecedented ways."

On seeing the character "1", your brain instinctively recognises it as a number. You don't need to consciously work it out ("Ah, a straight line with a slanted top, where have I seen this before? I think this is a number 1!"). Recognition happens instantly, because it draws on a huge pool of information that we have learned, by trial and error, over time.

Neural networks connect various single concepts (in the case of synthesis, things like "brightness", "inharmonicity", etc.) via "synapses". A variety of inputs are fed into the network, and its neurons learn by comparing the network's output against the desired result and adjusting their connections to shrink the disparity.
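To make the "learning from disparities" idea concrete, here is a minimal sketch in plain Python. It is not taken from the paper: the single `decay` parameter standing in for "brightness", the exponential amplitude model and the learning rate are all assumptions chosen for illustration. The loop repeatedly measures the gap between predicted and target harmonic amplitudes and nudges the parameter to shrink it, which is the basic idea behind training a differentiable synthesizer.

```python
import math


def harmonic_amplitudes(decay, n_harmonics=8):
    """Hypothetical brightness model: harmonic k has amplitude exp(-decay * k).

    A larger decay means weaker upper harmonics, so a duller tone.
    """
    return [math.exp(-decay * k) for k in range(1, n_harmonics + 1)]


def loss_and_gradient(decay, target):
    """Mean squared error against the target amplitudes, and its slope w.r.t. decay."""
    pred = harmonic_amplitudes(decay, len(target))
    n = len(target)
    loss = sum((p - t) ** 2 for p, t in zip(pred, target)) / n
    # The derivative of exp(-decay * k) with respect to decay is -k * exp(-decay * k).
    grad = sum(
        2 * (p - t) * (-k) * p
        for k, (p, t) in enumerate(zip(pred, target), start=1)
    ) / n
    return loss, grad


def fit_decay(target, start=2.0, learning_rate=0.5, steps=2000):
    """Plain gradient descent: step against the slope of the error."""
    decay = start
    for _ in range(steps):
        _, grad = loss_and_gradient(decay, target)
        decay -= learning_rate * grad
    return decay


# Pretend these amplitudes were measured from a recording (assumed decay of 0.5).
target = harmonic_amplitudes(0.5)
fitted = fit_decay(target)
```

A real network adjusts many thousands of such parameters at once, but each one is updated by the same compare-and-correct step.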

"These...components are designed to exhibit and to take advantage of known properties of audio signals, such as periodicity and harmonicity. As these strong priors on the sound structure are introduced, the amount of training data and model parameters can be significantly reduced."
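As a rough illustration of why such priors shrink the problem, the sketch below builds a short note from a handful of sinusoidal partials. It is an assumption-laden toy, not the authors' model: the function names, the exponential amplitude and envelope shapes, and the textbook stiff-string formula f_k = k * f0 * sqrt(1 + B * k^2) for inharmonic partials are all chosen here for illustration. The point is that once the signal is assumed to be (nearly) harmonic, a whole note is described by a few numbers rather than by every individual sample.

```python
import math


def partial_frequencies(f0, n_partials, inharmonicity=0.0):
    """Stiff-string partials: f_k = k * f0 * sqrt(1 + B * k^2).

    With B = 0 the partials are exact harmonics of f0.
    """
    return [
        k * f0 * math.sqrt(1.0 + inharmonicity * k * k)
        for k in range(1, n_partials + 1)
    ]


def synthesize_note(f0, duration=0.05, sample_rate=16000, n_partials=6,
                    inharmonicity=0.0, brightness_decay=0.5, note_decay=3.0):
    """Additive synthesis: a sum of decaying sinusoids at the partial frequencies."""
    freqs = partial_frequencies(f0, n_partials, inharmonicity)
    amps = [math.exp(-brightness_decay * k) for k in range(1, n_partials + 1)]
    n_samples = round(duration * sample_rate)
    samples = []
    for n in range(n_samples):
        t = n / sample_rate
        envelope = math.exp(-note_decay * t)  # the whole note fades over time
        value = sum(a * math.sin(2 * math.pi * f * t) for a, f in zip(amps, freqs))
        samples.append(envelope * value)
    return samples


# A3 (220 Hz) with a small, assumed inharmonicity coefficient.
note = synthesize_note(220.0, inharmonicity=0.0004)
```

Here five numbers (pitch, partial count, inharmonicity, brightness and decay) stand in for 800 raw samples, which hints at how a structured model can get by with less training data and fewer parameters.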

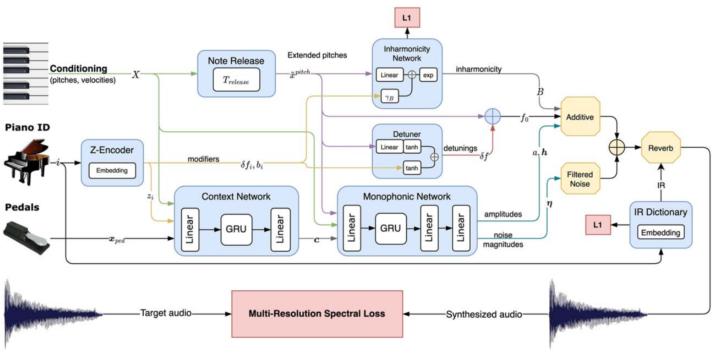

In this particular "Differentiable Digital Signal Processing (DDSP) framework", a spectral model has been created that makes use of wavetables, waveshapers, FM, infinite impulse response filters, parametric equalizers and artificial reverberation.

Researchers claim that this "makes for a lightweight, interpretable, and realistic-sounding piano model...Eventually, the proposed differentiable synthesizer can be further used with other deep learning models for alternative musical tasks handling polyphonic audio and symbolic data."

There's perhaps a comparison to be made with Roland's SA synthesis tech of the '80s, where a dedicated human team spent months crafting synthesized piano tones by resynthesizing base samples into harmonics, overtones and noises. This evolution simply has a computer do all of the groundwork.

Of course, we've seen neural network synthesis before. Google's NSynth Super debuted 5 years ago and raised eyebrows with its WaveNet-style autoencoder synthesis, which was trained on 300k notes from 1000 instruments, and let the user morph between 4 of them via the touchscreen. There's no doubt that exciting possibilities lie ahead for DDSP, but piano tuners don't have to hang up their rubber mutes just yet!

Find the full paper here: https://www.aes.org/e-lib/browse.cfm?elib=22231

Posted by MagicalSynthAdventure, an expert in synthesis technology from last century and Amiga enthusiast.
